Grant Writing in Evaluation

By Jessica Osborne, Ph.D.

Jessica is the Principal Evaluation Associate for the Higher Education Portfolio at The Center for Research Evaluation at the University of Mississippi. She earned a PhD in Evaluation, Statistics, and Measurement from the University of Tennessee, Knoxville, an MFA in Creative Writing from the University of North Carolina, Greensboro, and a BA in English from Elon University. Her main areas of research and evaluation are undergraduate and graduate student success, higher education systems, needs assessments, and intrinsic motivation. She lives in Knoxville, TN with her husband, two kids, and three (yes, three…) cats.

I’ve always been a writer. Recently, my mother gave (returned to) me a small notebook in which I was delighted to find the first short story I ever wrote. In blocky handwriting with many misspelled words, I found a dramatic story of dragons, witches, and wraiths, all outsmarted by a small but clever eight-year-old. The content of my writing has changed since then, but many of the rules and best practices remain the same. In this blog post, I’ll highlight best practices in grant writing for evaluation, including how to read and respond to a solicitation, how to determine what information to include, and how to write clearly and professionally for an evaluation audience.

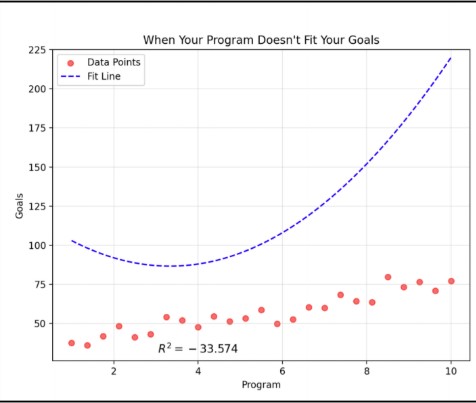

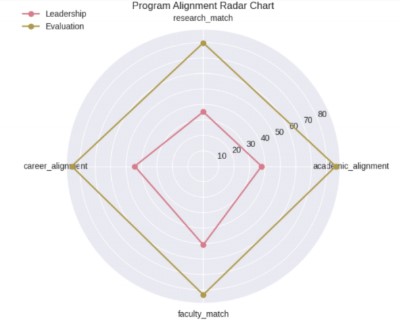

As an evaluator, you can expect to respond to solicitations in many different fields or content areas: primary, secondary, and post-secondary education, health, public health, arts, and community engagement, just to name a few. The first step in any of these scenarios is to read the solicitation closely and carefully to ensure you have a deep understanding of project components, requirements, logistics, timeline, and, of course, budget. I recommend a close reading approach that includes underlining and/or highlighting the solicitation text and taking notes on key elements to include in your proposal. Specifically, pay attention to the relationship between the evaluation scope and budget and to the contexts and relationships among key stakeholders. In reviewing these elements and determining if and how to respond, make sure you see alignment between what the project seeks to achieve and your (or your team’s) ability to meet project goals. Also, be sure to read up on the funder (if you are not already familiar with them) to get a sense of their overarching mission, vision, and goals. Instances when you may not want to pursue funding include a lack of alignment between the project scope/budget and your team’s capacity, or conflicts between your and the funder’s ethics, legal requirements, or overarching vision and mission.

Grant writing in evaluation typically takes two forms: responding as a prime (or solo) author to a request for proposals (RFP) or writing a portion of the proposal as a grant subrecipient. The best practices mentioned here are relevant in either case; however, if working on a team as a subrecipient, you’ll also want to match your writing tone and style to those of the other authors.

When responding to an RFP, your content should evidence that you know and understand:

- the funder – who they are; why they exist;

- the funder’s needs – what they are trying to accomplish; what they need to achieve project goals;

- and, most importantly, your fit – why you are the right person to meet their needs and help them achieve their goals.

For example, if you are responding to a National Science Foundation (NSF) solicitation, you will want to evidence three things: broader impacts and meticulously detailed research-based methods (they are scientists who want to improve societal outcomes); how your project fits the scope and aims of the solicitation (the goals for most NSF solicitations are specific, so be sure you understand what the individual program aims to achieve); and the background and experience of all key personnel (to evidence that you and your team can meet solicitation goals).

When considering content, be sure to include all required elements listed in the solicitation (I recommend double and triple checking!). If requirements are limited or not provided, at minimum be sure to include:

- an introduction highlighting your strengths as an evaluator and how those strengths match the funder’s and/or program’s needs

- a project summary and description detailing your recommended evaluation questions, program logic model, evaluation plan, timeline, approach, and methods

- figures and tables that clearly and succinctly illustrate key evaluation elements

When considering writing style and tone, stick to the three C’s:

- clear

- concise

- consistent

To achieve the three C’s, use active voice, relatively simple sentence structure, and plain language. Syntactical acrobatics containing opaque literary devices tend to obfuscate comprehension, and, while tempting to construct, have no place in evaluation writing. Also, please remember that the best writing is rewriting. Expect and plan for multiple rounds of revision and ask a colleague or team member to revise and edit your work as well.

And finally, a word on receiving feedback: in the world of evaluation grant writing, much like the world of academic publications, you will receive many more no’s than yes’s. That’s fine. That’s to be expected. When you receive a no, look at the feedback with an eye for improvement – make revisions based on constructive feedback and let go of any criticisms that are not helpful. When you receive a yes, celebrate, and then get ready for the real work to begin!